If you want to extract Shopify store data such as product prices, reviews, and other details, you can do it yourself with the Google Sheets IMPORTXML formula. It is easy and doesn't require any programming knowledge. However, if you want to scrape all the product data at a large scale, you may run into the tool's limitations, which can make the task impossible.

In this blog, we'll review the possibilities and limitations of scraping Shopify and other e-commerce stores with Google Sheets. We'll also look at other solutions that can give you complete product data for your specific business tasks.

Using the Google Sheets IMPORTXML Formula

A bit of theory about the IMPORTXML function, from the Google Help Center: "Imports data from any of various structured data types including XML, HTML, CSV, TSV, and RSS and ATOM XML feeds." Sample usage:

IMPORTXML("https://en.wikipedia.org/wiki/Moon_landing", "//a/@href")

IMPORTXML(A2,B2)

The IMPORTXML function takes two arguments:

The URL of the web page you want to scrape data from.

The XPath query that locates the element containing the data.

XPath stands for XML Path Language and is used to navigate through the elements and attributes of an XML document. Later in this blog, we'll show how to find the XPath of a page element (product name, description, price, etc.).
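To make the XPath idea a little more concrete outside of Sheets, here is a minimal Python sketch using the standard library's xml.etree.ElementTree, which understands only a limited subset of XPath. The markup and the product-title class are hypothetical. Real product pages are rarely well-formed XML, so for actual pages a library such as lxml (which supports full XPath 1.0) would usually be a better fit.

```python
import xml.etree.ElementTree as ET

# A tiny, well-formed stand-in for a product page (hypothetical markup,
# not taken from any real Shopify theme).
PAGE = """
<html>
  <body>
    <h1 class="product-title">Classic Cotton Tee</h1>
    <span class="price">$19.99</span>
    <a href="/collections/all">All products</a>
    <a href="/cart">Cart</a>
  </body>
</html>
""".strip()

root = ET.fromstring(PAGE)

# Roughly equivalent to the XPath //h1[@class='product-title'];
# ElementTree supports this attribute-predicate subset of XPath.
title = root.find(".//h1[@class='product-title']").text

# ElementTree cannot select attributes directly (no //a/@href as in the
# IMPORTXML sample), so read the href attribute of every <a> instead.
links = [a.get("href") for a in root.iter("a")]

print(title)  # Classic Cotton Tee
print(links)  # ['/collections/all', '/cart']
```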

Now we'll show how to use this formula to scrape product information from Shopify stores.

Steps for Scraping Shopify Store Data into Google Sheets

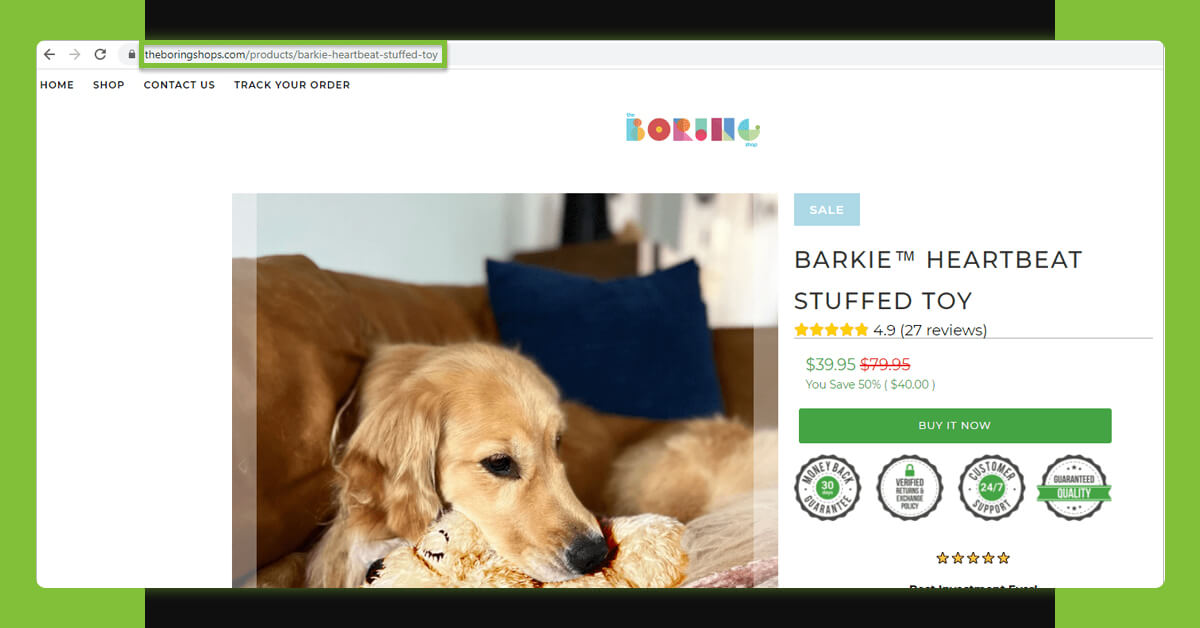

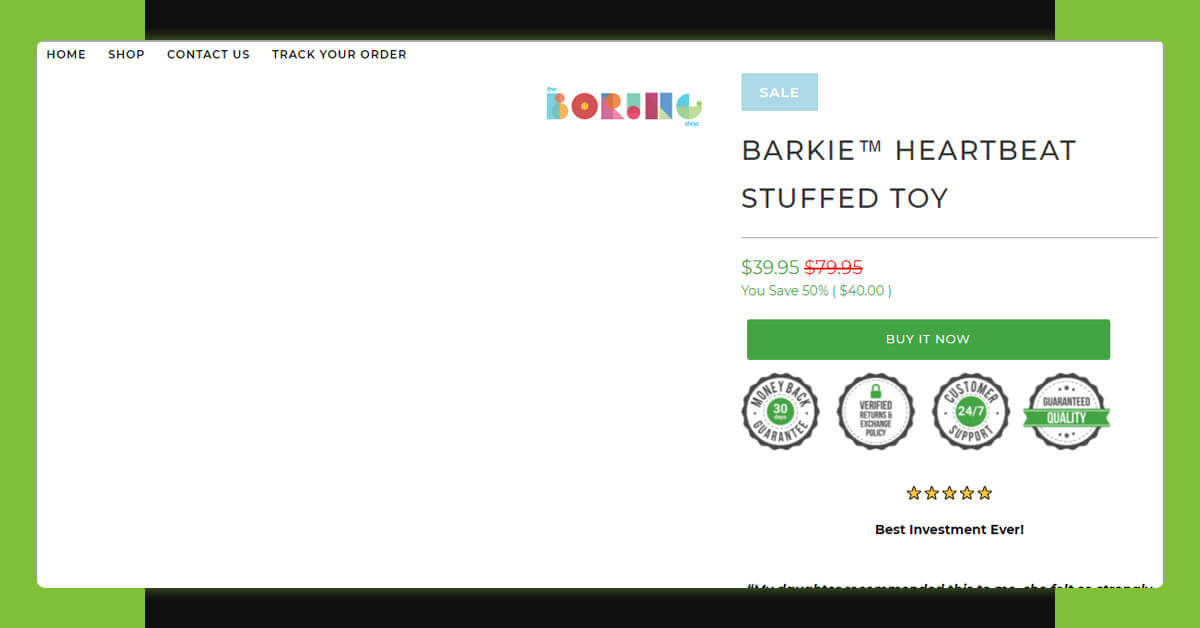

Here is the product page we'll scrape:

Let's start by retrieving the product title:

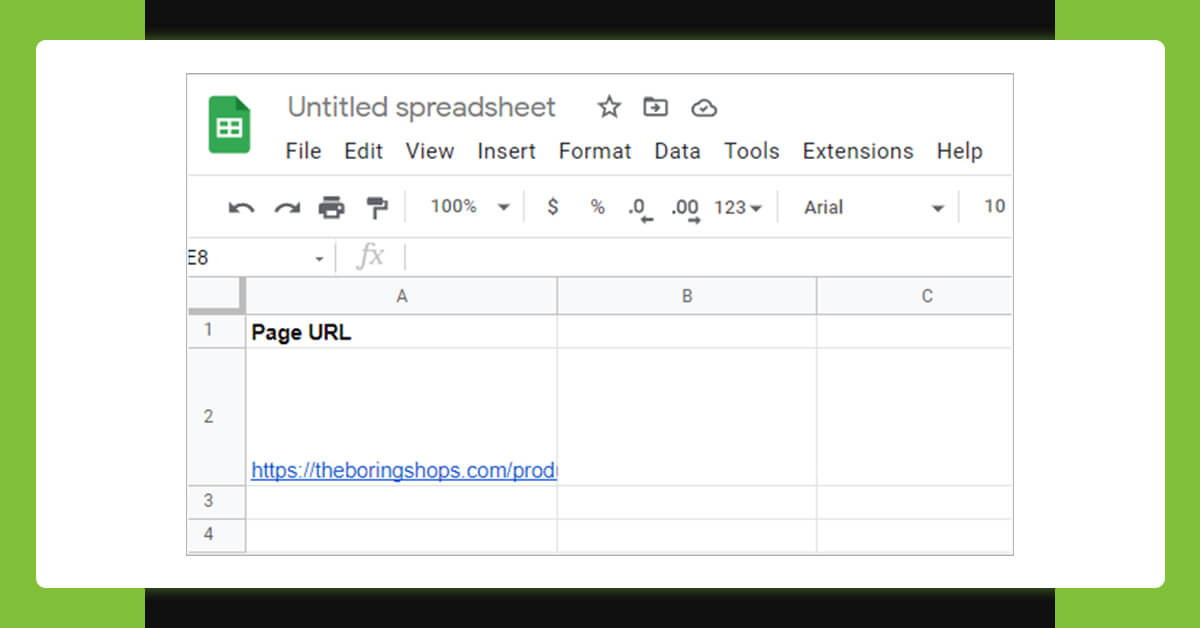

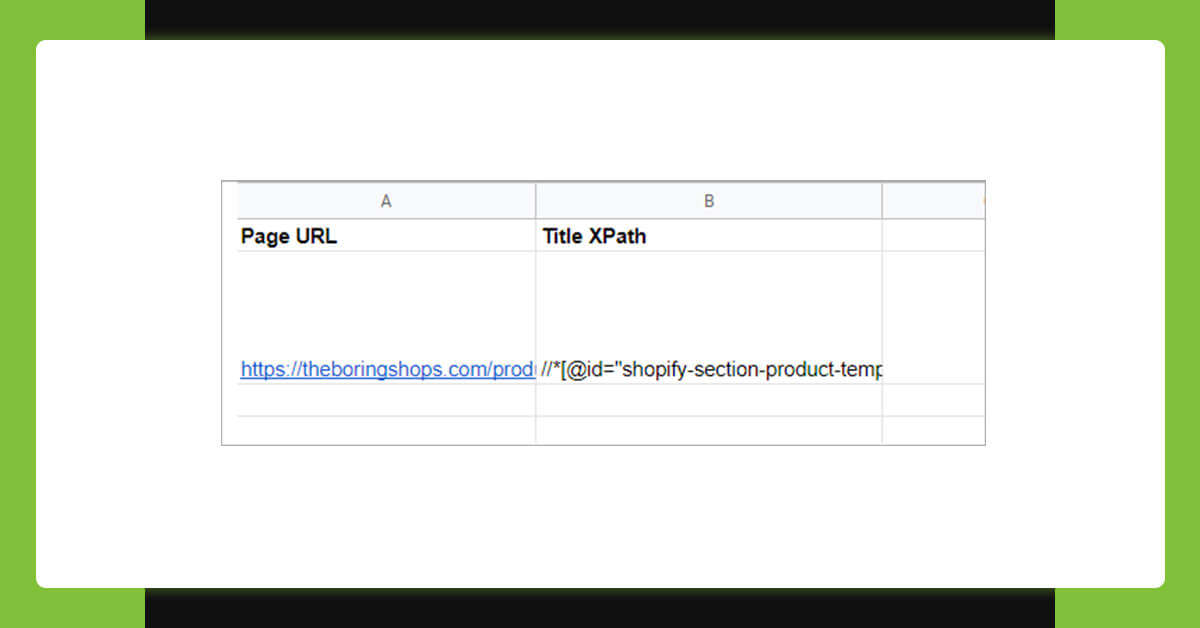

1. Create a Google Sheet.

2. Add column names and paste the product page URL.

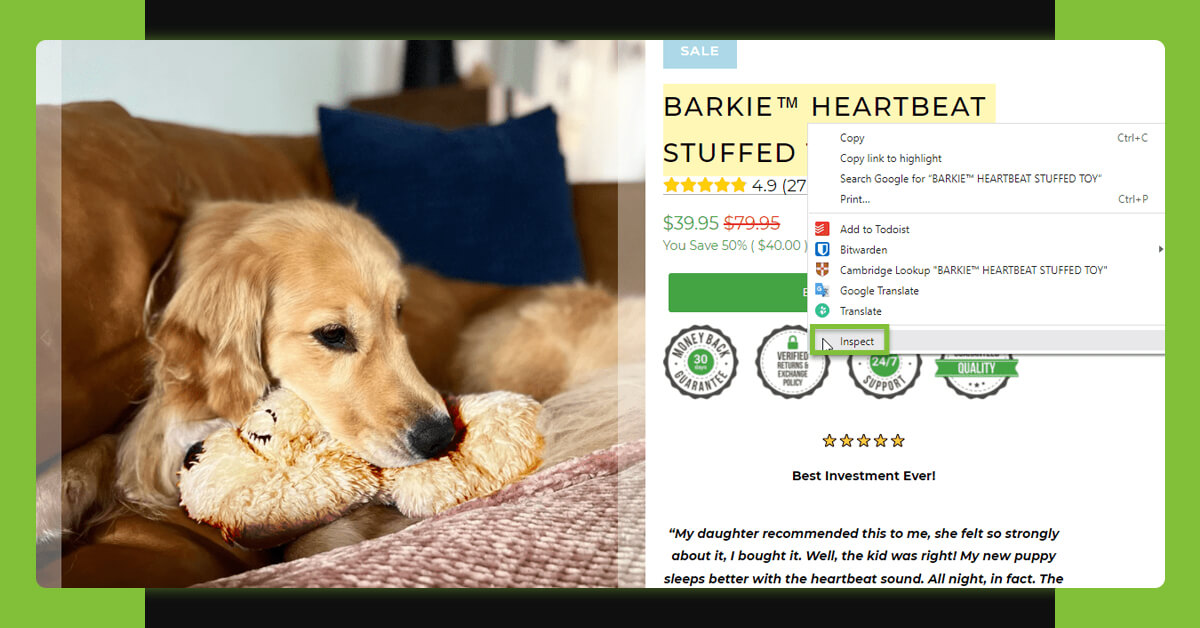

3. Get the XPath for the product title. To do this, select the title, right-click to open the menu, and click "Inspect":

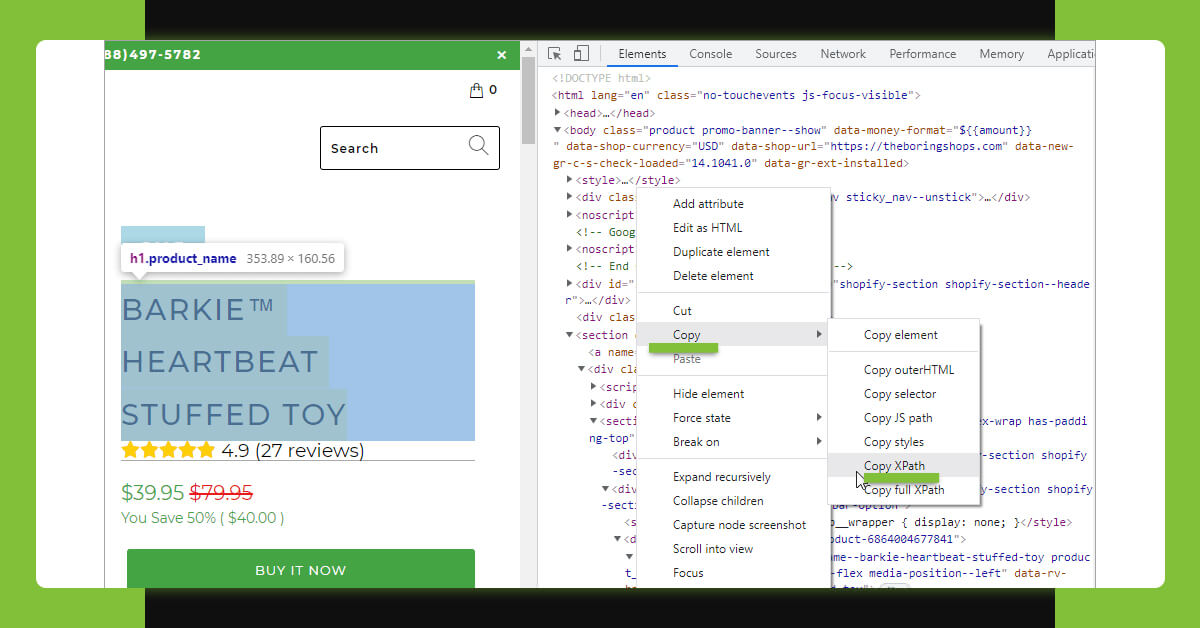

In Chrome DevTools, you will see the element's HTML tag highlighted. Right-click it and choose Copy > Copy XPath from the menu:

Paste it into the corresponding cell:

4. Then enter the formula =IMPORTXML(url, xpath_query) and press Enter.

*Select the corresponding cells for url and xpath_query. In this example, the URL is in cell A2 and the XPath is in cell B2:

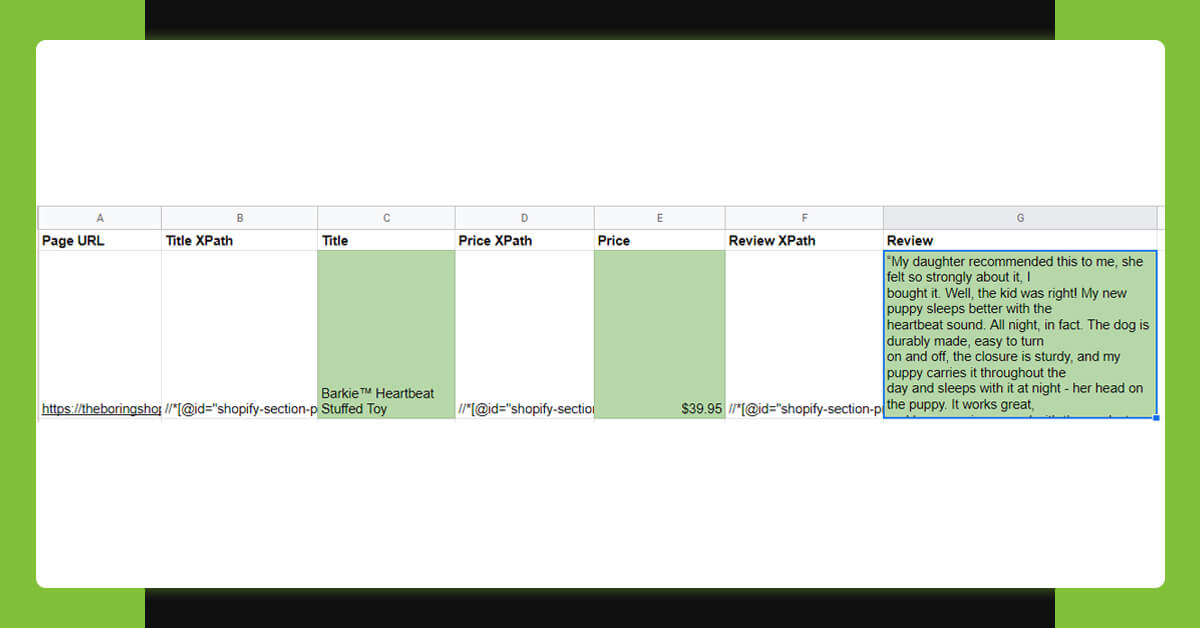
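In the sheet, each field is simply its own IMPORTXML call with its own XPath. The same one-query-per-column idea can be sketched in Python under a few assumptions: the markup, the class names, and the extract_row helper below are hypothetical, and ElementTree again stands in for a full XPath engine.

```python
import xml.etree.ElementTree as ET

# Hypothetical product markup; real Shopify themes use their own classes.
PAGE = """
<html><body>
  <h1 class="product-title">Classic Cotton Tee</h1>
  <span class="price">$19.99</span>
  <span class="review-count">42 reviews</span>
</body></html>
""".strip()

# One query per column, like one IMPORTXML formula per cell.
# These paths are illustrative assumptions, not real Shopify selectors.
QUERIES = {
    "Title": ".//h1[@class='product-title']",
    "Price": ".//span[@class='price']",
    "Reviews": ".//span[@class='review-count']",
}


def extract_row(html: str, queries: dict) -> dict:
    """Hypothetical helper: return {column: text}, using '' when a query
    matches nothing (roughly where IMPORTXML would report empty content)."""
    root = ET.fromstring(html)
    row = {}
    for column, path in queries.items():
        node = root.find(path)
        row[column] = (node.text or "").strip() if node is not None else ""
    return row


row = extract_row(PAGE, QUERIES)
print(row)
# {'Title': 'Classic Cotton Tee', 'Price': '$19.99', 'Reviews': '42 reviews'}
```

Adding a new field is then just one more entry in the query mapping, much like adding one more column and formula to the sheet.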

Once the product name appears in the sheet, we can scrape the product's price and reviews in the same way: repeat steps 2-4 for each field. This is what we have in the sheet:

Why Does the "Imported Content Is Empty" Error Occur?

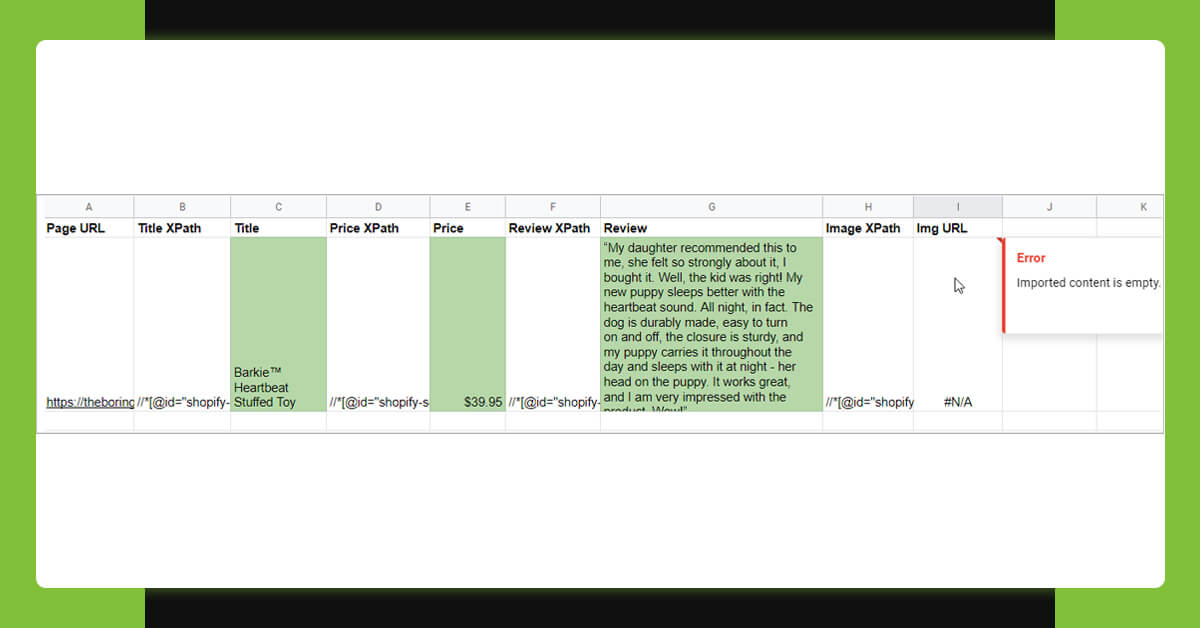

When we try to scrape the image URLs the same way, however, we get the "Imported content is empty" error (you may also see an error saying the imported XML content could not be parsed):

These errors can be caused by an incorrect XPath. Try selecting the element more precisely and copying the XPath again. If the error persists, you are probably trying to scrape dynamic content, and the Google Sheets formula doesn't work for many dynamic websites.
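Why might a copied XPath stop matching? Paths copied from DevTools often depend on element positions, so a small layout change can make them point at the wrong element or at nothing. Here is a minimal Python sketch with two hypothetical versions of the same page, comparing a positional path (similar in spirit to what Copy XPath often produces) with a path anchored on a class attribute. ElementTree's limited XPath subset is used only for illustration.

```python
import xml.etree.ElementTree as ET

# Two versions of the same hypothetical page: in V2 the theme has added
# a promo banner <div> before the product block.
V1 = "<body><div><h1 class='product-title'>Classic Cotton Tee</h1></div></body>"
V2 = ("<body><div class='promo'><h1>Free shipping!</h1></div>"
      "<div><h1 class='product-title'>Classic Cotton Tee</h1></div></body>")

# Positional path: "the h1 inside the first div under body".
POSITIONAL = "./div[1]/h1"
# Attribute-based path: "any h1 whose class is product-title".
BY_CLASS = ".//h1[@class='product-title']"

results = {}
for name, html in (("v1", V1), ("v2", V2)):
    root = ET.fromstring(html)
    # First value depends on element order, second only on the class name.
    results[name] = (root.find(POSITIONAL).text, root.find(BY_CLASS).text)
    print(name, *results[name])
# v1 Classic Cotton Tee Classic Cotton Tee
# v2 Free shipping! Classic Cotton Tee
```

Attribute-based paths still break if the theme renames the class, but they usually survive layout shifts better than positional ones.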

Dynamic content includes interactive UI elements such as drop-downs with product variants, "See more" sections, multiple product images, pagination, and so on. To find dynamic elements, try disabling JavaScript for the page. Here, we've disabled JavaScript for the sample page, and the images are no longer displayed:

That is why we couldn't get the image URLs, and it brings us to one of the limitations of scraping an e-commerce website with the IMPORTXML function.
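The sketch below illustrates why a static scraper comes back empty. It parses a hypothetical initial HTML response, in which the product images are only added by a script, using Python's built-in html.parser. Like IMPORTXML, this parser sees only the markup the server returned and never executes JavaScript; the page content and image paths are assumptions for illustration.

```python
from html.parser import HTMLParser

# Hypothetical initial server response: the gallery is empty, and the
# image tags are only created by JavaScript once a browser runs the script.
INITIAL_HTML = """
<div id="gallery"></div>
<script>
  const urls = ["/images/tee-front.jpg", "/images/tee-back.jpg"];
  for (const u of urls) {
    const img = document.createElement("img");
    img.src = u;
    document.getElementById("gallery").appendChild(img);
  }
</script>
"""


class ImgCollector(HTMLParser):
    """Collect the src attribute of every <img> tag in the raw markup."""

    def __init__(self):
        super().__init__()
        self.srcs = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            self.srcs.append(dict(attrs).get("src"))


parser = ImgCollector()
parser.feed(INITIAL_HTML)
parser.close()
print(parser.srcs)  # [] because no <img> tag exists until the script runs
```

Fed a page where the `<img>` tags are already in the server's HTML, the same collector would return their URLs; the difference is only in when the content appears.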

Limitations of the IMPORTXML Function

The biggest limitation of the import formulas is that they can't extract dynamic pages, which load their content using JavaScript or API calls.

Google Sheets and other static scrapers can only extract content that is present in the initial page response, not content generated afterwards.

Some other limitations you might face include:

It's not easy to identify the correct XPath query or to fix one that doesn't work.

IMPORTXML and Google Sheets can't be used to extract large quantities of data at scale (the formulas eventually stop working).

Import formulas can't access data behind a login or password wall.

If you plan to import the scraped data into an online store, it can also take time to clean up the data and prepare the correct file structure for the import.
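Preparing scraped data for a store import mostly means mapping it onto the target platform's file layout. As a minimal sketch, assuming Shopify's product CSV format, the Python below writes only a small subset of its columns, puts the first image on the product row, and puts each additional image on its own row with the same Handle. The make_handle and to_shopify_csv helpers and the sample data are hypothetical; check the column names and row rules against Shopify's current official template before importing real data.

```python
import csv
import io
import re

# A small subset of the column names in Shopify's product CSV template
# (assumption: verify against the current official template).
COLUMNS = ["Handle", "Title", "Body (HTML)", "Variant Price",
           "Image Src", "Image Position", "Image Alt Text"]


def make_handle(title: str) -> str:
    """Hypothetical helper: lowercase, hyphen-separated URL handle."""
    return re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")


def to_shopify_csv(products: list) -> str:
    """Write one row per product image (assumes at least one image each).
    Extra image rows reuse the Handle and leave product fields blank."""
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=COLUMNS)  # missing keys -> ""
    writer.writeheader()
    for p in products:
        handle = make_handle(p["title"])
        for pos, (src, alt) in enumerate(p["images"], start=1):
            row = {"Handle": handle, "Image Src": src,
                   "Image Position": pos, "Image Alt Text": alt}
            if pos == 1:  # product-level fields go on the first row only
                row.update({"Title": p["title"],
                            "Body (HTML)": p["description_html"],
                            "Variant Price": p["price"]})
            writer.writerow(row)
    return out.getvalue()


# Usage with hypothetical scraped data; the description keeps its HTML tags.
sample = [{
    "title": "Classic Cotton Tee",
    "description_html": "<p>Soft <strong>100% cotton</strong>.</p>",
    "price": "19.99",
    "images": [("https://example.com/tee-front.jpg", "Tee, front"),
               ("https://example.com/tee-back.jpg", "Tee, back")],
}]
print(to_shopify_csv(sample))
```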

An Alternative Way to Extract Shopify and Other E-commerce Sites to Google Sheets

When you need to extract dynamic websites with varying structures, or large data sets, more robust solutions are required. In these cases, you can rely on Retailgators' data extraction services.

Retailgators combines powerful web scraping capabilities with manual data adjustments tailored to each client's specific requirements.

Here you will get:

Scraping of exactly the data you need (list in the order form the fields you want in the resulting Google Sheet, or attach a file template).

Extraction of all data, including dynamic elements: multiple product images, product variants, paginated pages, etc.

Extraction from password-protected sites (provided you supply the login credentials).

Cleaned data in a ready-to-import format. You can order an import file for WooCommerce, PrestaShop, Shopify, Magento, and other shopping carts and ERP systems. Just specify the format and we'll deliver the extracted data accordingly.

Let's look at an example of data extracted from a sample website with Retailgators. As you can see, Retailgators can scrape all the product data, including multiple image URLs and image alt tags:

Here is the same data formatted for a Shopify import. In this file, we've added the columns from Shopify's import template and filled them with the extracted data. Product descriptions keep their HTML tags as required:

Contact Retailgators now! Send your request through the online order form and get all your data scraped to Google Sheets easily!
